Under Pressure (To Experiment With AI)

When permission isn’t the same as safety

🌸 ikigai 生き甲斐 is a reason for being, your purpose in life – from the Japanese iki 生き meaning life and gai 甲斐 meaning worth 🌸

Diamonds are made under pressure.

So is graphite… and so is dust.

Same force. Same carbon. Different conditions, completely different outcomes. The difference is what surrounds you when the weight comes down.

This has been on my mind as I’ve been digging into research on AI’s impact at work this week. The stats tell mixed stories and nobody knows for certain what the next decade holds… but AI will for sure continue to change how lots of us do things, and I want to be equipped to help people through that in ways that work for how real humans actually learn effectively... not how tech bros or consultancy reports would like them to *grin*.

Section AI looked at 4,500 work-related AI use cases across 5,000 knowledge workers and found that only 15% are likely to generate any return on investment, 59% are classified as basic one-off tasks and a mere 2% qualify as advanced.

Meanwhile, a BCG and Columbia Business School survey found that 76% of executives believe their workforce feels enthusiastic about AI adoption, whereas only 31% of individual contributors agreed!! And Slalom’s 2026 research found that 68% of people said they can keep pace with AI, yet 93% reported that barriers like underdeveloped skills and inadequate training limit their progress.

People simultaneously saying “I’m fine” and “I don’t have what I need.” If you’ve ever been that human trying to look competent while privately drowning, that will feel painfully familiar.

🎶 Pressure, pushing down on me, pressing down on you 🎶

In defence of graphite

Maybe 59% of people doing basic AI tasks isn’t the crisis the headlines want you to think it is.

Graphite is made from the same carbon as diamonds. It’s what makes pencils work. It conducts electricity. It lubricates engines. It’s genuinely useful. Not every AI interaction needs to be a diamond. Sometimes you need a pencil to sketch or write a rough draft, and there’s nothing wrong with that.

The problem comes when organisations expect a diamond mine when they haven’t provided the heat, the time or the support those transformations require… and then they’re looking at the graphite and calling it a failure of the carbon.

In some workplaces, “experimentation” doesn’t really mean genuine inquiry. It means visibly performing adaptability inside an innovation story the organisation wants to tell about itself. People are not always being asked to explore; sometimes they’re being asked to look like the sort of person who explores.

Some of those 59% might be doing exactly what they need to do right now. And some of the people who appear slow to adopt AI aren’t scared or behind, they’re correctly unconvinced. They’ve looked at the available use cases, sensed that the tool they have access to is immature for their context or that the promised productivity gains are mostly theatre, and decided to wait. Section AI’s own data supports this... if only 15% of use cases generate ROI, the sceptics might have better instincts than the early adopters performing enthusiasm for their quarterly reviews.

Not all hesitation is deficiency. Sometimes it’s discernment.

Why “just experiment” is a threat, not an invitation

For the people who do want to learn, who are ready and willing... the environment matters hugely.

When an organisation says “we encourage everyone to experiment with AI,” they may very well mean “we’ve given you permission and we’re excited” … but what many employees hear is “figure it out yourself and don’t mess it up”.

Section AI found that the majority of C-suite executives said their company encourages experimentation. When individual contributors were asked the same question? Only 10% agreed.

For many people, the fear is not really the technology. It’s the exposure of becoming a beginner again in a culture that rewards polish.

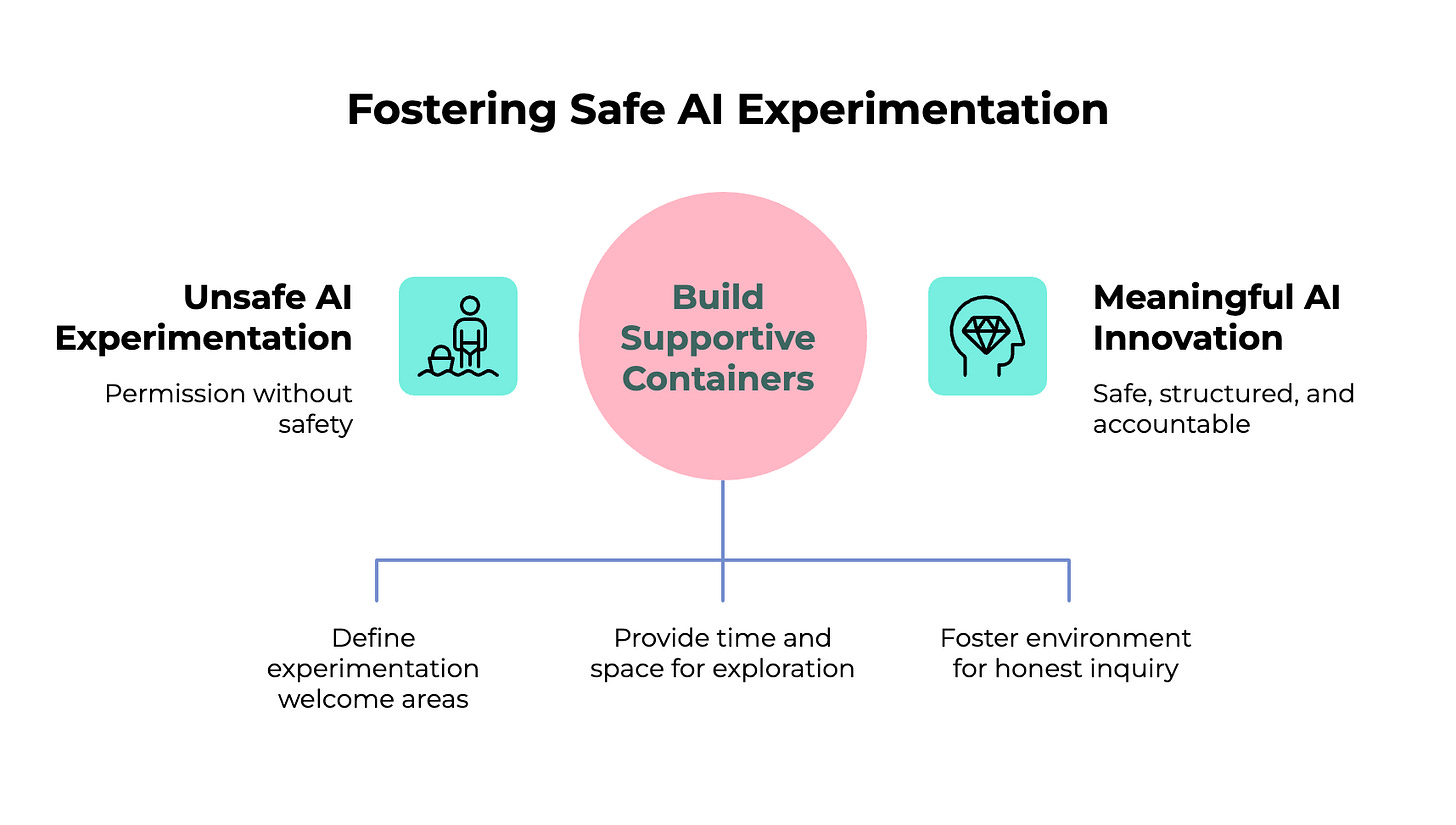

That gap goes deeper than communication. It’s about three things that permission alone can’t fix:

First, boundaries. People need clarity on where experimentation is welcome, where it isn’t, and what good use actually looks like. Without that, “just experiment” produces shadow AI, low-value busywork and a lot of hidden anxiety. BCG’s global survey found that when employees don’t have the AI tools they need, more than half will find alternatives and use them anyway.

Second, time. If people have no slack, there is no real experimentation. A 2026 L&D report found that 41% of employees lack time to learn. Asking an exhausted workforce to “play around” with AI isn’t a perk, it’s uncompensated labour disguised as innovation. Another item on an already overloaded to-do list, except this one comes with the implicit threat that if you don’t do it, you’ll be left behind.

Third, safety. MIT Technology Review and Infosys surveyed 500 business leaders and found that 83% believe psychological safety impacts AI success, but only 39% rate their own organisation’s safety as “very high”... and research published in Nature found that AI adoption can actually reduce psychological safety, increasing depression when the surrounding environment is one of ambiguity or fear.

Boundaries, time and safety. Without all three, “experiment freely” is just unpaid risk transfer from the organisation to the employee.

The people meant to help you are pretending too

The managers tasked with creating these conditions? Gallup found that employees with actively supportive managers are 6.5 times more likely to find their AI tools useful, yet only 28% of employees strongly agree their manager provides that support.

The concern is that some managers are actually more frightened about AI than frontline workers. BCG found 43% of managers and leaders worry about losing their jobs compared to 36% of frontline employees. They can see how dramatically AI could restructure their organisations and they are supposed to steady everyone else while privately wondering whether the ground beneath them is shifting too.

Managerial pretending is contagious. When a leader champions a tool they don’t fully understand while trying to conceal their own uncertainty, teams learn that performance matters more than honesty. The people who might ask a genuinely useful question if they felt safe to, stay quiet instead.

🎶 It’s the terror of knowing what this world is about. 🎶

I know this because I’ve been the dust

I’m supposed to be the AI person. I coach, speak, teach workshops and write about this every week… and there are many moments when I feel the squeeze. When a new model or tool launches and the gap between what I think I should know and what I actually know opens up. When someone asks a question I can’t answer and I could choose to dress up uncertainty as confidence.

I think I already mentioned the friend who messaged me last year asking where to start with AI. I rattled off diagnostic questions. Have you used Deep Research mode? Do you switch on thinking mode? Written any custom instructions?

Their reply: “Looking at the questions you asked I can see I haven’t even scratched the surface.”

I was trying to help, but what I did was measure them against a hidden syllabus and watch their enthusiasm drain away. Pressure, applied with the best of intentions… still dust.

That friend had a reason for wanting to learn. A sense of purpose, a curiosity, a professional goal they cared about… and my first instinct was to assess their technical level rather than ask what mattered to them. I nearly disconnected them from their motivation before they’d even started.

This is where ikigai lives in this conversation. Your reason for being, your sense of purpose at work, is the very thing that makes AI adoption feel so loaded. When someone tells you to experiment with a technology that might reshape your role, they’re not just asking you to learn a tool. They’re asking you to renegotiate your competence, your status, your sense of what makes you valuable. That goes straight to the heart of purpose. No wonder people freeze.

The container matters more than the content

At points in “Under Pressure,” the song almost falls apart… are the fabulous Mercury and Bowie even performing the same piece anymore? … then it resolves into an unexpected plea for love.

Why can’t we give love one more chance?

Every piece of research I’ve dug through points somewhere similar. The difference between diamonds and dust is care. But care alone isn’t enough, you also need structure.

Psychological safety without direction produces a lovely warm room where nobody quite knows what to do. Direction without safety produces compliance dressed up as progress. The hierarchy matters: safety first, then structure, then accountability. In that order. Skip to step three and you’re just adding pressure to an already fractured container.

I’ve watched this done well in so many communal or cohort-based learning settings, whether a community of practice, a workshop or an office hours drop-in. People arrive nervous, someone says “this is probably a stupid question” and someone else says “oh thank goodness, I thought it was just me.” The whole room exhales. And then we get into the practical stuff, the use cases, the workflow experiments, the things that actually move the needle.

Near the end of the song Bowie sings “This is our last dance.” I don’t think it’s our last dance with AI adoption, but why wait to advocate for better choices and framing?

We can keep applying pressure and calling it progress. We can keep mistaking permission for safety and visible activity for meaningful learning.

Or we can build containers that deserve real experimentation and innovation… clearer boundaries, protected time, honest managers, shared practice and room to be visibly new.

Safety first, then structure, then accountability.

Diamonds are made under pressure, but only when something holds you while you change.

Beautiful souls, please share your thoughts in the comments… for example, has anyone ever asked you how you feel about AI at work, rather than what you're doing with it?

Sarah, seeking ikigai xxx

PS ✍️ Here’s a journal prompt for you...

Draw a simple container and inside it write the conditions that help you learn something new. A person who makes you feel safe to be wrong. A physical space. A time of day. A ritual. Now outside the container, write the pressures you currently feel around AI. Look at both. Is there anything inside that you could bring to bear on what’s outside it?

PPS 🤖 An AI prompt to try...

“I’d like to do an exercise based on the idea that meaningful AI adoption needs three things: psychological safety, clear structure and accountability, in that order.

Here’s a list of my regular work tasks: [paste or describe them]

Help me sort these into three categories. First, tasks where AI could save me time without me needing to learn much new (my ‘graphite’ tasks, useful and practical). Second, tasks where AI could genuinely transform how I work, but I’d need support, time and permission to experiment properly (my ‘diamond potential’ tasks). Third, tasks where I might deliberately choose not to use AI because my human judgement, creativity or relationships are the point (my ‘keep precious’ tasks).

For the diamond potential category, help me think about what container I’d need around me to actually experiment well. What boundaries would help me feel clear rather than anxious? How much protected time would I realistically need? Who could I learn alongside so I’m not figuring this out alone?”

Don’t perform competence in the prompt. Be honest. The AI doesn’t care if you’re behind. That’s one of its genuine superpowers.

PPPS 🎶 Today’s soundtrack...

“Under Pressure” by Queen and David Bowie. Two absolute giants who somehow wrote one of the most human songs ever recorded, about fear and love and what we owe each other, and then never performed it live together. A moment in time between two people who understood pressure and transformation.

Listen to it differently today. Listen for the resolution, less a crescendo and more a plea for love.

And because it’s the eve of International Women’s Day and I can’t resist... I’m also sharing a jaw-dropping rehearsal clip of Annie Lennox and David Bowie preparing to perform this at the Freddie Mercury Tribute Concert in 1992 (complete with DB smoking and George Michael singing along in the crowd!). Annie stepped into Freddie’s part and made it entirely her own, this is what it looks like when a powerhouse of a woman meets a song about pressure and absolutely smashes it out of the park.

People were scared of the role calculators would have. Obviously different technologies, but we fear change and the unknown. Some lean in and take advantage; others hang back.

Only time will tell.

I work in a role that implements AI. We also openly admit some of our initiatives might fail, despite our best efforts. We find this brings down some barriers for our stakeholders, but not always. We get a nod and a smile in the room, but are met with resistance when nobody is watching. Rightfully, distrust has been sown in the general workforce about whether AI will be leveraged to augment our work or to replace it completely.