Small Island, Big Kind Policy

From bedroom AI policy drafts to community ikigai experiments

🌸 ikigai 生き甲斐 is a reason for being, your purpose in life - from the Japanese iki 生き meaning life and gai 甲斐 meaning worth 🌸

I recently did something some people would think slightly nuts: I drafted an AI policy for the Isle of Man (for fun, in my own time). Not because anyone asked me to, but because I’ve read SO many AI policy docs from around the world that lacked human voices or any sense of the values and ethos of the places themselves… and I wondered about tackling it differently.

You know that feeling when you're frustrated about something and everyone keeps saying "someone should do something about that" until you realise you are, in fact, someone? That's partly what happened here. I care deeply about how artificial intelligence might reshape our lives, and I wanted to understand what it could look like if we started from a place of genuine care for our people’s flourishing and an even better Manx community, rather than just efficiency metrics and GDP growth.

I also wanted to have a (very) considered opinion to share when consultation opens locally.

The process taught me a lot. Drafting policy forces you to step into other people's shoes. What does AI mean for my elderly friend who still struggles with her smartphone? For my friend who's an artist and terrified her work might become obsolete? For parents trying to work out how to protect their children from things they don't fully understand themselves? For all our amazing small businesses and community organisations?

Most of the policy docs I read seemed written by algorithms for algorithms. All productivity this, economic growth that, competitive advantage the other. Where's the bit about helping humans feel purposeful? Where's the recognition that efficiency isn't the same as meaning, and that sometimes the slower, more human way of doing things is actually better for us?

The superpower of being small

I realised while wrestling with all this that we don't have to wait for others to figure it out for us. Small places, whether that's my Island home with our 85,000 people, or your village, or your neighbourhood… have something the big players don't. We're accountable to each other in ways that matter.

Big tech says "move fast and break things." But in a small place where policy changes show up in our supermarket queue conversations, we can instead move kindly and fix things.

If we make bad decisions we bump into the people affected at the Co-ey. That changes how you think about consequences. You can't hide behind bureaucracy when Mrs Cannell from down the road will tell you exactly what she thinks of your bright ideas.

We should also be able to adapt quickly. We don't need to convince millions of people or navigate seventeen layers of approval. When something isn't working, we can change course. When something is brilliant, we should be able to double down without waiting for a committee to form a subcommittee.

It excites me to think of small communities modelling approaches that bigger ones can learn from. The Isle of Man has always been a testing ground, and we can do the same with AI: show what it looks like when real communities shape technology adoption rather than just accepting whatever Silicon Valley decides is good for us.

We can ALL do this on even smaller scales too, in our own communities and organisations.

Questions that matter for AI adoption

While thinking about what I want to see in AI policy, I sketched some frameworks that might help. One is an Ikigai Index, a way to assess ethical community adoption of AI tools beyond the usual procurement metrics:

Meaning - Does it help our people do what genuinely matters to them?

Mastery - Does it increase capability and reduce stress?

Connection - Does it strengthen trust and relationships?

Contribution - Does it add value to the wider community?

I've been experimenting with a simple survey people can use after interacting with any AI system, made up of five quick statements:

"Today, this service helped me do what matters."

"I felt more capable and less stressed using it."

"I felt respected and understood."

"I can see how this benefits our community."

"I'd choose this again."

Take an AI tool for learning and practising Manx Gaelic. Designed well, it could score highly across all measures. It would help preserve something meaningful, work alongside human teachers rather than replacing them, connect people to their cultural heritage and serve the whole community.

Compare that with tools like Character.AI being marketed to children as companions. They fail the connection test, substituting artificial relationships for real ones. They fail the contribution test, encouraging self-absorption rather than community engagement. They're solving a problem we shouldn't have, rather than addressing why children might want AI “friends” in the first place.

Another way to assess an AI system:

Could you explain what the AI system does in a few minutes?

Can a human step in easily when things go wrong?

Is there a visible way to raise concerns without bureaucratic nightmares?

If a system fails these basic questions, should it be touching people's lives?

Community immunity (how to collectively say no and discuss without sounding nuts)

The beautiful thing about communities is that we can decide things together. We can develop what I think of as "community immunity", collective antibodies against some of the noise and nonsense.

Maybe your village values human creativity too much to let AI-generated art dominate the local festival. Maybe your school board insists any automated system must have a human backup, because second chances matter. Maybe your parish council recognises that algorithms designed to keep people scrolling endlessly are fundamentally at odds with encouraging real-world community engagement.

These aren't anti-technology positions. They're pro-human positions. They come from communities that have done the work of thinking through what they actually want technology to do for them, rather than just accepting whatever's on offer.

The key to having these discussions without coming across as either a conspiracy theorist or a Luddite is simple: actually spend time using AI tools. I mean properly using them, not just asking ChatGPT to write you a limerick about your cat.

A ragdoll named Saffy, seventeen

Has the grumpiest face ever seen

She yowls for her dad

And Dreamies (when had)

Though her memory's no longer pristine.

Understand what they can and can't do. Play with them. Break them a bit. Then you can have informed conversations about both their potential and their limitations, rather than fear-based reactions or uncritical enthusiasm.

You can then bring genuine curiosity to your next community meeting about how these tools might help people in your area, balanced with thoughtful questions about protection, bias, values and choice:

How do we safeguard vulnerable people?

Whose voices are missing from these systems and decisions?

What makes our community special, and how do we preserve that?

How do we ensure people maintain agency and alternatives?

Here's a practical demand you could make if any AI system touches public services: it should come with a "System Card", like a nutrition label for food, but for algorithms. One page, readable in under a minute, explaining what it does, where the data comes from, who can intervene when things go wrong, its known risks and a real contact point. If a system is deemed ready to use on people, it's not unreasonable to expect it to be explained clearly enough that you could understand it while standing at a counter.

And please, don't just focus on the scary stuff. Think about the myriad ways AI might genuinely help. Maybe it's supporting us in making healthier life choices, or helping small local businesses compete with larger ones, or creating personalised learning experiences for children. The aim is to be thoughtful about what serves your community and what doesn't.

From personal agency to collective power

The same bloody-minded determination that made me sit down and write my own draft of an AI policy can be scaled up. When enough people in a place decide they're not going to let others design their future without their input, remarkable things become possible.

Your community can develop its own principles for how and when technology gets adopted. Your neighbourhood can insist that any AI system used locally serves human flourishing, not just efficiency metrics. Your community could even become a place where tech companies have to prove their tools genuinely help people without harming others.

I'm not suggesting we retreat into digital isolationism or reject beneficial technology. I'm suggesting we get better at distinguishing between technology that serves us and technology that serves itself. Between innovation that enhances human capability and innovation that diminishes it.

The future shouldn’t just be decided in boardrooms. It's better shaped in the places where people live their lives, by people who care enough to engage thoughtfully with these questions rather than just accepting whatever they're offered.

Your community has more influence than you think. Your voice matters more than you know. And your values, the things that make your place worth living in, should be the compass that guides progress.

AI is very likely to change our communities; we should have a say in how.

What matters most where you live? And more importantly, what are you going to do about nurturing it?

Thank you for reading, beautiful souls. Your stories and reflections in the comments matter. Let's keep talking about, and designing, communities online and off that honour the beautiful complexity of being human in a rapidly changing world.

Sarah, seeking ikigai xxx

PS - Here are some bullet journal prompts to explore your own community agency this week:

Community Inventory: What makes your local area special? What would you fight to preserve? What needs improvement?

The Ikigai Index: Pick one piece of technology you use regularly and score it from 1 to 5 on each question: Does it help you do what matters? Does it make you feel more capable? Do you feel respected using it? Does it benefit your community? Would you choose it again?

Your Village Voice: What's one local meeting, forum, or group you could attend where these conversations might happen? What would you bring up?

30-60-90 Pilot Design: If you could propose one small AI experiment for your community, what would it be? Design a mini-trial: 30 days to try something small, 60 days to adjust based on feedback, 90 days to decide whether to continue, scrap, or redesign it.

PPS - Try this AI prompt for your own community thinking:

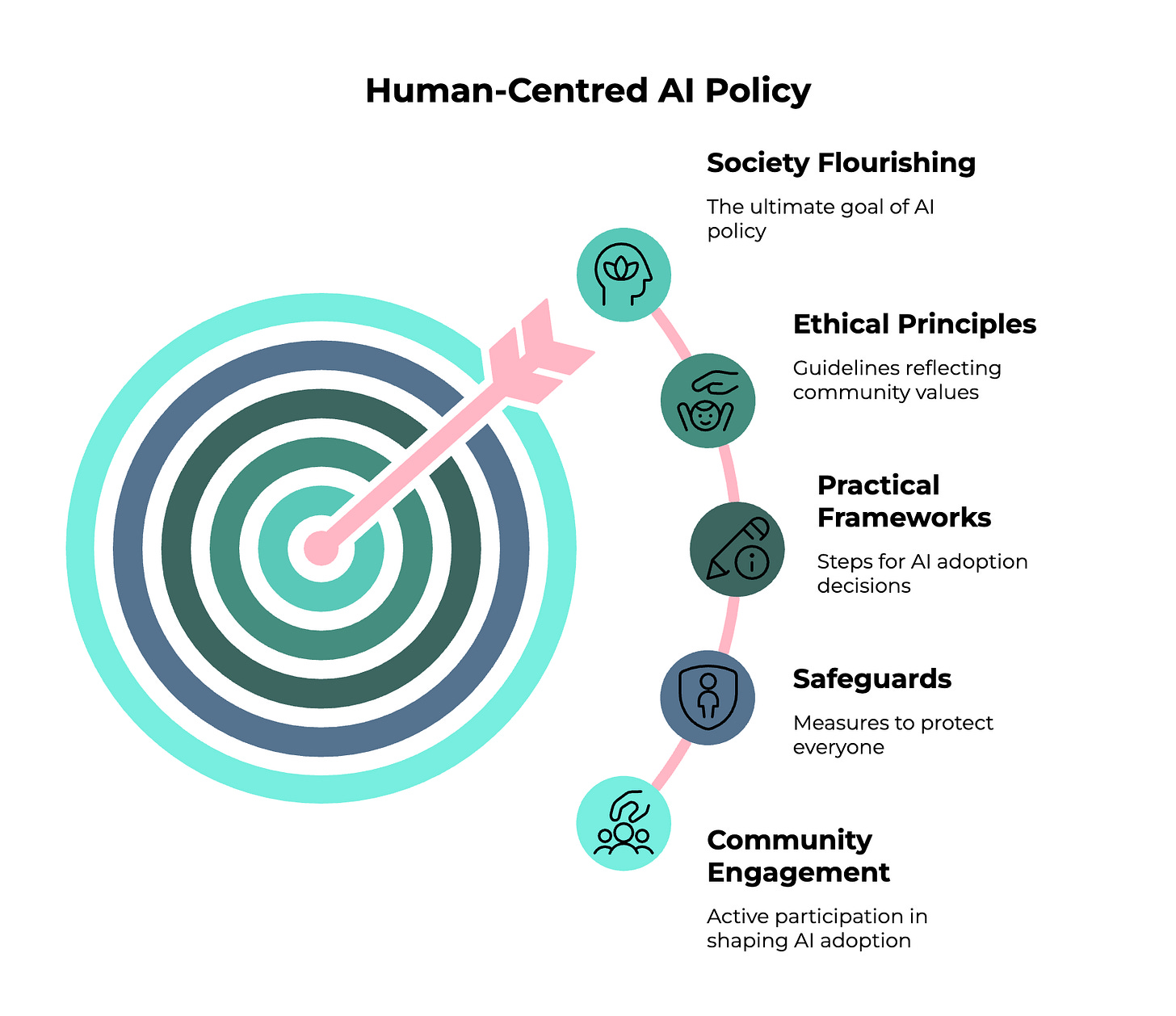

“Help me draft an AI policy for my [club, organisation or community type and context]. Walk me through creating principles that reflect our values, practical guidelines for AI adoption decisions, and safeguards for our stakeholders. Then stress-test this framework by helping me think through: How would these principles work if scaled up to city/regional/national level? What tensions emerge between local values and broader implementation? Where might our approach conflict with or complement larger policy frameworks? Give me a charter that works for us now but also contributes thoughtfully to the bigger conversation about human-centred AI policy direction.”

PPPS - This week's soundtrack has to be "The Times They Are A-Changin'", Brandi Carlile's cover of Dylan's classic. Her gorgeous voice carries that message of inevitable change, of old powers giving way to new voices. The song captures exactly what this essay is about: the recognition that change is coming whether we participate in shaping it or not, but that when communities come together with intention and values, they can help direct that change rather than just being swept along by it.
